AssignmentGPT Blogs

If you have been following the AI space at all, you already know that Anthropic does not release models just to keep up with the crowd. Every new version of Claude comes with a clear purpose. And this one? It is designed to dominate coding and complex reasoning like no model before it. I have spent time testing it, reading the benchmarks, and watching how it handles the kind of tasks that actually matter to students, developers, and professionals. If you want to see how it stacks up against the competition, our Claude vs ChatGPT comparison covers that in detail.

In this review, I am going to walk you through what is new, what works, what does not, and - most importantly - whether it is worth your money. Whether you are a college student building your first app or a developer managing large codebases, this guide will give you the honest picture.

What Is Claude Opus 4.6?

The Opus line is Anthropic's top tier - built for people who need serious performance, not just a chatbot that gives decent answers. Claude Opus 4.6 is the newest and most capable model in this lineup, launched in early 2026. It focuses on one thing above everything else: making reasoning sharper and code generation cleaner.

Think about it this way. If Claude Sonnet is a smart college graduate, Opus is the senior engineer who has seen everything and can plan five steps ahead. It handles long, multi-step tasks, retains context across enormous documents, and adapts how hard it thinks based on what the problem actually demands.

It is aimed at developers, researchers, enterprise teams, and technically advanced students. It is not for casual use - and Anthropic is honest about that. If you need raw power for complex work, this is the model built for it. To understand the broader landscape of what AI agents are and how they fit into this picture, that background is worth having before diving deeper.

Core Innovations and New Features

Here is where it gets genuinely exciting. Opus 4.6 is not just a small bump over its predecessor. Several of its new capabilities represent real changes in what AI models can actually do day-to-day.

1M Token Context Window (Beta)

This is massive. Claude Opus 4.6 supports up to 1 million input tokens in beta, with 128K output tokens. In plain terms, you can feed it an entire codebase, a long research report, or months of meeting transcripts - and it keeps all of it in context while responding. Some competing models cap out at 128K input tokens, so the difference in real-world use can be enormous.
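If you want a quick sanity check on whether your own project would fit, here is a minimal sketch. It assumes roughly 4 characters per token, which is only a rule of thumb and not Anthropic's actual tokenizer, and the function names are my own for illustration.

```python
import math

CHARS_PER_TOKEN = 4        # assumption: rough rule of thumb, not a real tokenizer
CONTEXT_LIMIT = 1_000_000  # input-token limit quoted above (beta)


def estimate_tokens(text: str) -> int:
    """Approximate the token count of a string from its length."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_context(documents: list[str], limit: int = CONTEXT_LIMIT) -> bool:
    """Return True if the combined estimate stays within the limit."""
    return sum(estimate_tokens(doc) for doc in documents) <= limit
```

Treat the result as a ballpark. Code and non-English text often tokenize less efficiently than plain English prose, so leave yourself some headroom.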

Adaptive Thinking - Low, Medium, High, or Max

Here is something interesting: you can literally tell Opus how hard to think. The four effort levels - Low, Medium, High, and Max - let the model allocate the right amount of compute for the task. Ask it a quick factual question? Low is fine. Designing a distributed system? Max thinking kicks in and the model works through the problem far more carefully.

This is not a gimmick. In my experience, models that burn heavy compute on simple tasks are expensive and slow. Adaptive thinking gives you control - and that matters when you are paying per token.

Agent Teams in Claude Code

Claude Code now supports Agent Teams - multiple Claude agents that coordinate on different parts of a project at the same time. Think of it as a small engineering team inside your IDE, where one agent handles planning, another writes code, and another reviews it. The rise of AI in coding has made features like this increasingly practical for real development workflows. For students working on complex final projects or developers managing large repositories, this is genuinely useful.

Context Compaction for Infinite Conversations

Long conversations used to hit a wall when the context window filled up. Context compaction intelligently summarizes earlier parts of the session, so the conversation can continue without losing critical information. For multi-hour coding or research sessions, this is a quiet but important upgrade.
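To make the idea concrete, here is a toy sketch of compaction. This is not Anthropic's implementation, which is not public in this level of detail. The `naive_summary` helper is a stand-in I made up, and the 4-characters-per-token estimate is an assumption.

```python
from typing import Callable


def rough_tokens(text: str) -> int:
    """Very rough token estimate (assumption: about 4 characters per token)."""
    return max(1, len(text) // 4)


def naive_summary(messages: list[str]) -> str:
    """Stand-in summarizer: keeps the first 40 characters of each message.

    A real system would ask a model to write the summary instead.
    """
    return "Summary of earlier turns: " + " | ".join(m[:40] for m in messages)


def compact(
    history: list[str],
    budget: int,
    keep_recent: int = 4,
    summarize: Callable[[list[str]], str] = naive_summary,
) -> list[str]:
    """Collapse older messages into one summary once the budget is exceeded."""
    total = sum(rough_tokens(m) for m in history)
    if total <= budget or len(history) <= keep_recent:
        return history
    split = len(history) - keep_recent
    older, recent = history[:split], history[split:]
    return [summarize(older)] + recent
```

The design choice worth noticing is that the most recent turns are kept verbatim, since they usually matter most for the next reply, while everything older is squeezed into a single summary message.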

Benchmark and Real-World Performance

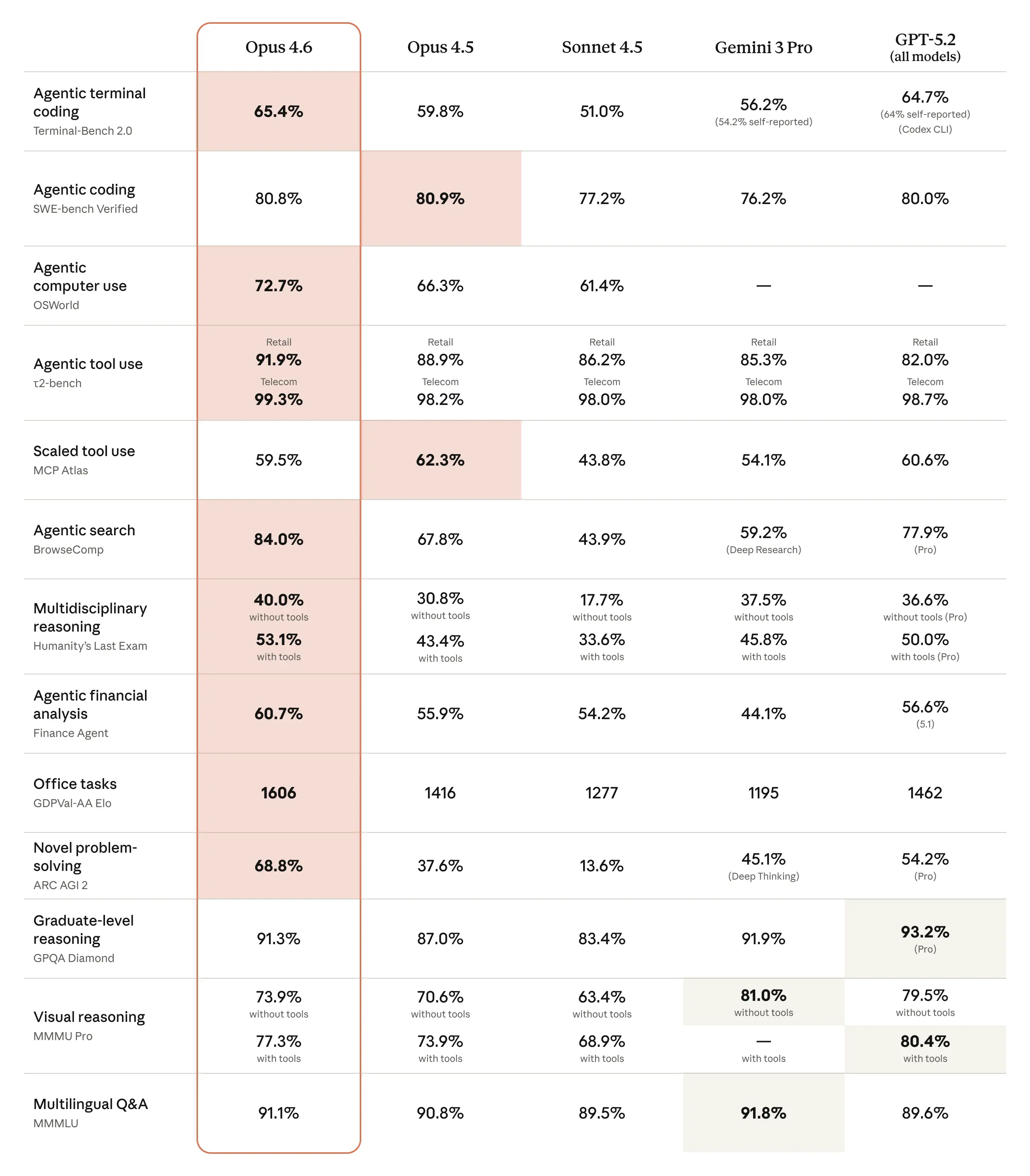

Numbers do not always tell the full story. But when a model leads across every major benchmark, it is worth paying close attention. Here is where Claude Opus 4.6 stands:

- Terminal-Bench 2.0: 65.4% - ranked #1 industry-wide for autonomous terminal task completion.

- ARC-AGI 2: 68.8% - nearly double the score of Claude Opus 4.5, signaling a dramatic leap in abstract reasoning.

- GDPval-AA: 1,606 Elo - 144 points ahead of GPT-5.2, the closest competitor.

- SWE-bench Verified: 80.8% - meaning it resolved more than 4 in 5 real-world GitHub software engineering issues correctly.

Here is the reality: SWE-bench is not a hand-crafted test. It is built from real GitHub issues in open-source projects, and a fix only counts if the project's own tests pass. An 80.8% score means the model can walk into a real codebase and fix real bugs. That is the number that matters to developers.

The ARC-AGI 2 improvement deserves a special mention. Nearly doubling on an abstract reasoning test between versions is not normal. It suggests Anthropic made fundamental changes to how the model reasons - not just how it retrieves answers. For a broader look at how large language models work, that context helps explain why this kind of leap matters.
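To put the 144-point Elo gap on GDPval-AA in perspective, here is a small worked example. It assumes the leaderboard uses the conventional Elo scale, where a 400-point gap corresponds to 10-to-1 odds. I have not confirmed that GDPval-AA is scaled exactly this way, so read the result as an approximation.

```python
def expected_score(rating_gap: float) -> float:
    """Expected head-to-head score for the higher-rated side.

    Uses the conventional Elo formula (assumption: the benchmark
    follows the standard 400-point logistic scale).
    """
    return 1.0 / (1.0 + 10 ** (-rating_gap / 400.0))


if __name__ == "__main__":
    # 144 is the Elo lead over GPT-5.2 quoted in the benchmark list.
    print(f"{expected_score(144):.2f}")
```

Under that assumption, a 144-point lead works out to an expected score of roughly 0.70, meaning the higher-rated model would be preferred in about 7 out of 10 head-to-head comparisons.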

Hands-On Testing: 4 Real Workflows

Benchmarks tell you what a model can theoretically do. What I care about is how it holds up when you actually sit down and use it. I ran four tests designed to push Claude Opus 4.6 on tasks that matter in the real world.

Multi-Step Agent Workflow

I asked Opus 4.6 to plan and build a small SaaS analytics dashboard - broken into phases: requirements gathering, system design, database schema, backend API, frontend architecture, and deployment plan. For each phase, it had to produce deliverables, identify risks, and suggest mitigations.

The result was impressive. The dashboard was reactive, had a responsive design, and every phase came with concrete, actionable output. The risk identification was grounded - not generic filler. For prototyping and concept work, this level of structured planning in a single response is rare.

Code Refactor and Feature Expansion

I handed it a messy legacy codebase and asked it to refactor everything into clean architecture, while adding JWT authentication, password hashing, structured logging, persistent database storage, a REST API, and unit tests - all at once.

It took a while. Long enough that the interface asked if I wanted a notification when it finished.

It was completely worth the wait. The output included multiple modular files, each serving a clear purpose. The architecture documentation explained every decision. The code was clean, well-commented, and met every requirement I had set. I have seen junior developers deliver less on this kind of task. Students looking to sharpen their own skills can also check out these best websites for beginners to practice coding alongside using a model like this.

Algorithmic Reasoning Under Constraints

I asked it to design and implement an efficient system to detect duplicate files across millions of records - with memory limited to 2GB, horizontal scaling required, and a working Python prototype expected.

The response was thorough. It covered each system component clearly, justified the tradeoffs, and the prototype was sound. What stood out was that the justifications were not generic. It reasoned through why specific choices made sense under the stated constraints - which is exactly what you want from a reasoning model.
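For readers who want a feel for the problem, here is a minimal sketch of one common approach. This is my own illustration, not the code Opus 4.6 produced. It buckets files by size first, then hashes only the candidates in fixed-size chunks so memory use stays flat regardless of file size.

```python
import hashlib
import os
from collections import defaultdict

CHUNK_SIZE = 1 << 20  # read 1 MiB at a time so memory use stays flat


def file_digest(path: str) -> str:
    """Hash a file's contents in fixed-size chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(CHUNK_SIZE), b""):
            digest.update(chunk)
    return digest.hexdigest()


def find_duplicates(paths: list[str]) -> list[list[str]]:
    """Return groups of paths whose contents share a SHA-256 digest."""
    # Pass 1: files of different sizes cannot be duplicates, so bucket by size.
    by_size: dict[int, list[str]] = defaultdict(list)
    for path in paths:
        by_size[os.path.getsize(path)].append(path)

    # Pass 2: hash only the files that share a size with at least one other.
    by_hash: dict[str, list[str]] = defaultdict(list)
    for candidates in by_size.values():
        if len(candidates) < 2:
            continue
        for path in candidates:
            by_hash[file_digest(path)].append(path)

    return [sorted(group) for group in by_hash.values() if len(group) > 1]
```

This single-process version still holds every path in memory. At the scale of millions of records, one plausible way to meet the scaling requirement is to shard the work by file size or digest prefix so each worker only sees its own slice.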

Windows System Debugging

I described a real problem - intermittent system freezes, BSODs, Chrome crashes, and a PC that stopped booting until I removed a RAM stick. I asked for a ranked list of probable root causes, diagnostic steps, and a repair decision tree.

The response was grounded and genuinely helpful. It ranked causes by likelihood, gave specific Windows tools and commands to run, and structured the troubleshooting in a way that matched what someone who actually knows hardware would say. It covered things I had missed after weeks of troubleshooting myself.

Claude Opus 4.6 vs GPT-5.2 vs Gemini 3.1 Pro

Here is a quick comparison across the features that matter most:

| Feature | Claude Opus 4.6 | GPT-5.2 | Gemini 3.1 Pro |
|---|---|---|---|
| Context Window | 1M tokens (beta) | 128K tokens | 1M tokens |
| SWE-bench Verified | 80.8% | ~72% | ~68% |
| ARC-AGI 2 | 68.8% | ~55% | ~60% |
| Adaptive Thinking | Yes (4 levels) | Limited | No |
| Agent Teams | Yes (Claude Code) | No | No |
| Output Tokens | 128K | 16K | 8K |
| Input / Output Pricing | $5 / $25 per M | $7.50 / $30 per M | $3.50 / $10.50 per M |
| Best For | Coding, reasoning, enterprise | General use, chat | Multimodal tasks |

The verdict: For coding and multi-step reasoning, Claude Opus 4.6 wins clearly. GPT-5.2 is stronger for general conversation and broad use. Gemini 3.1 Pro is more affordable for multimodal tasks. But if your primary need is code quality and complex problem-solving, Opus is the obvious choice. You can also read our detailed Gemini 2.5 Pro vs Claude 3.7 Sonnet breakdown for a deeper model-to-model comparison.

Enterprise and Professional Use Cases

Large organizations are already putting Claude Opus 4.6 to work in serious production environments. Here is how real teams are using it:

- Finance: Rakuten is using Claude for customer support and financial analysis workflows that require precise, context-aware responses.

- Investment research: Norway's sovereign wealth fund has integrated Claude into its research and document review pipelines.

- Software development: Cursor and Windsurf, two of the most widely used AI coding environments, have integrated Opus 4.6 directly into their platforms.

- Legal and compliance: Teams are using it for contract review, regulatory analysis, and summarizing lengthy legal documents with high accuracy.

The common thread across all of these is long-context performance and reliability. Organizations trust Opus 4.6 because it holds up over extended tasks without losing track of what matters. For students curious about how AI is reshaping professional environments, our guide on AI agents in education shows how this shift is playing out in academic settings too.

Safety, Reliability, and Trust

Anthropic was founded with safety as its core mission - and that shows in how Claude Opus 4.6 is built. The model is trained using Constitutional AI, which means it has a set of guiding principles baked into its responses, not just content filters bolted on afterward.

On the compliance side, Anthropic meets ISO 27001, SOC 2 Type II, and HIPAA standards. For students using it through educational platforms, and for enterprises handling sensitive data, this matters. If you are wondering about the ethical side of using these tools academically, our article on whether using AI is cheating gives a balanced, practical take on that question.

The model is also calibrated to be honest about what it does not know. In my testing, it flagged uncertainty rather than confabulating answers - which is exactly the behavior you want from a tool you are depending on for research or production code.

Claude Opus 4.6 Pricing and How to Access It

Let me show you exactly what you are looking at cost-wise, and how to get started.

Pricing at a Glance

- Input tokens: $5 per million tokens

- Output tokens: $25 per million tokens

- Pricing is the same as Claude Opus 4.5 - but note that Opus 4.6 consumes roughly 5x more tokens per task due to deeper reasoning. Real-world API costs will be higher.
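Here is a quick way to turn those rates into a per-request estimate. The token counts in the example are hypothetical numbers I picked for illustration, not measurements.

```python
INPUT_PRICE_PER_M = 5.00    # USD per million input tokens (from the list above)
OUTPUT_PRICE_PER_M = 25.00  # USD per million output tokens (from the list above)


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request at the listed rates."""
    return (
        input_tokens / 1_000_000 * INPUT_PRICE_PER_M
        + output_tokens / 1_000_000 * OUTPUT_PRICE_PER_M
    )


if __name__ == "__main__":
    # Hypothetical request: 200K tokens in, 20K tokens out.
    print(f"${request_cost(200_000, 20_000):.2f}")
```

For that hypothetical request, the input side costs $1.00 and the output side $0.50, for $1.50 in total. Because the cost is linear in tokens, a task that uses five times as many tokens costs five times as much, even though the listed rates have not changed.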

Is Claude Opus 4.6 Free?

Claude Opus 4.6 is not available on the free plan. It requires a paid subscription or API access. If you are a student looking for capable tools that fit a tighter budget, our roundup of the best AI tools for students covers a range of free and affordable options worth considering.

Access Options

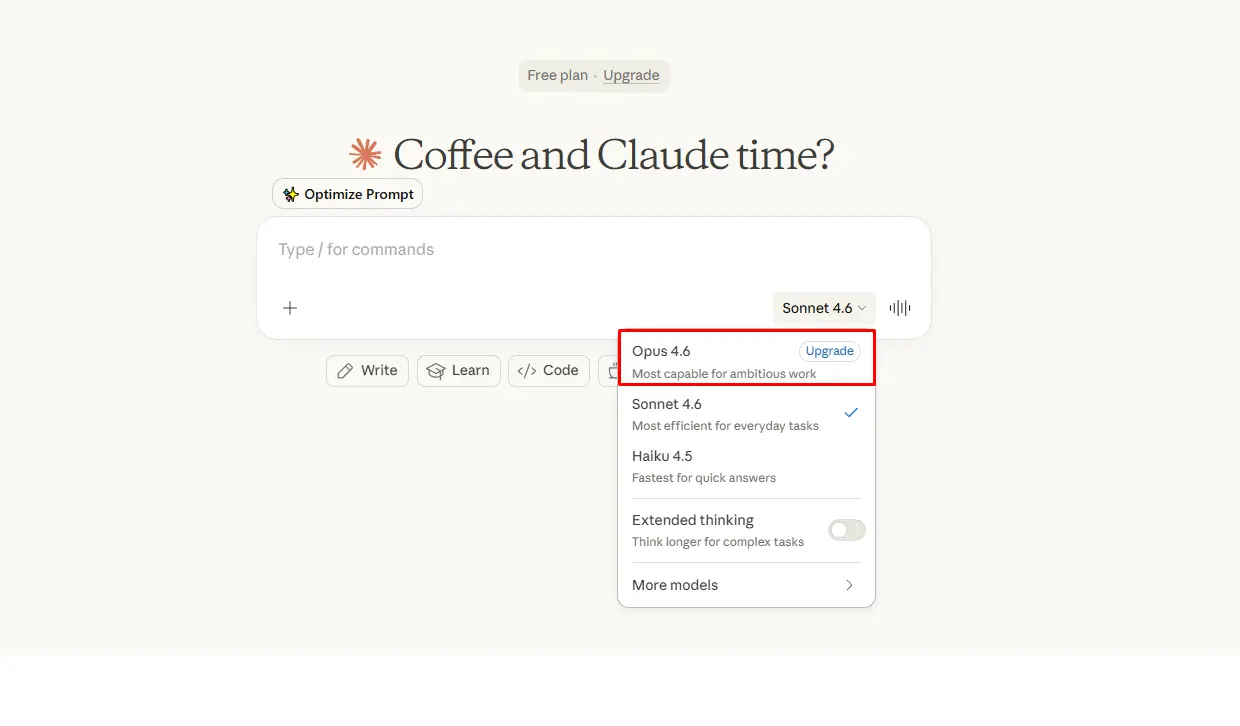

- Claude Pro / Max / Team / Enterprise: Access through claude.ai with a paid subscription. Pro starts at $20/month.

- API access: Available through the Anthropic Developer Platform with usage-based billing at the rates above.

- Cloud integrations: Available through Cursor, Windsurf, and other developer platforms that integrate Anthropic models.

How to Access Step-by-Step

- Go to claude.ai and sign up or log in.

- Upgrade to Pro, Max, Team, or Enterprise from the settings menu.

- Select Claude Opus 4.6 from the model selector at the top of the chat.

- For API access, visit console.anthropic.com, create an account, and generate an API key.
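Once you have a key, a request to Anthropic's Messages API looks roughly like the sketch below. It only builds the request so you can see its shape. The model ID here is an assumption on my part, so confirm the exact identifier in the console or the official docs before using it.

```python
import json
import urllib.request

API_URL = "https://api.anthropic.com/v1/messages"
MODEL_ID = "claude-opus-4-6"  # assumption: check the exact model ID in the docs


def build_request(
    prompt: str, api_key: str, max_tokens: int = 1024
) -> urllib.request.Request:
    """Build (but do not send) a single-turn Messages API request."""
    body = {
        "model": MODEL_ID,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        method="POST",
    )
```

To actually send it, pass the result to `urllib.request.urlopen` and parse the JSON response. In practice most people will prefer Anthropic's official SDK, which wraps the same endpoint. Keep the key in an environment variable rather than hard-coding it.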

Is Claude Opus 4.6 Worth It? (Bottom Line)

Here is my honest take.

If you are a developer, researcher, or advanced student who regularly works on complex coding tasks, multi-step reasoning problems, or large document analysis - Claude Opus 4.6 is worth the cost. The benchmark leads are real, the hands-on performance backs them up, and the adaptive thinking feature alone makes it more efficient than running a single-speed model at full power every time.

If you are a casual user or student who mostly needs writing help, summaries, or light coding support - stick with Claude Sonnet. It is faster, cheaper, and more than capable for everyday work.

Claude Opus 4.6 is not trying to be everything. It is built to be the best at the hard stuff. And from what I have seen, it delivers on that. For students who want to get more out of AI tools across their coursework and projects, our guide on how AI can boost your assignment grades is a practical next read.


Digital Marketer | SEO

I’m Dipak Dangodara, the SEO Expert at AssignmentGPT AI. I manage and optimize the website’s search engine presence with a strong focus on organic growth, visibility, and performance. My work includes technical SEO, keyword research, on-page and off-page optimization, and tracking SEO performance to align with search engine best practices.

At AssignmentGPT AI, my goal is to build sustainable rankings, improve traffic quality, and ensure the platform delivers long-term value through effective SEO strategies.
